Blizzard is experimenting with using an AI to moderate its games

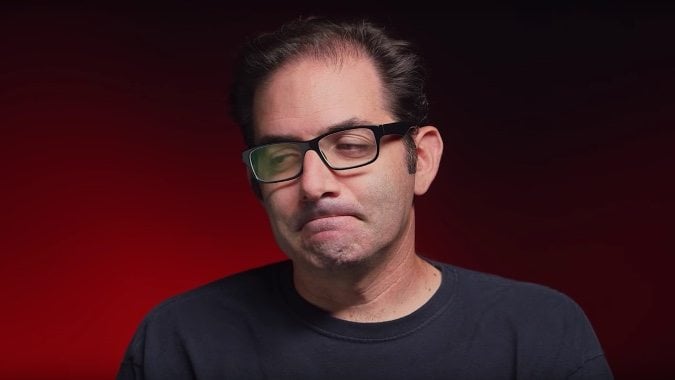

Blizzard has made no secret of its aggressive battle against toxicity in Overwatch. The company has discussed the issue repeatedly and recently joined an alliance of gaming giants to collaborate on anti-toxicity efforts. And, in a recent interview with Kotaku, Game Director Jeff Kaplan revealed Blizzard has been experimenting with moderation via machine learning: teaching an AI to recognize toxic behavior in Overwatch.

“We’ve been experimenting with machine learning. […] We’ve been trying to teach our games what toxic language is, which is kinda fun. The thinking there is you don’t have to wait for a report to determine that something’s toxic. Our goal is to get it so you don’t have to wait for a report to happen.”

Kaplan also says Blizzard wants to explore having its AI moderate not only what people say, but also what they do:

“That’s the next step,” said Kaplan. “Like, do you know when the Mei ice wall went up in the spawn room that somebody was being a jerk?”

While I admire any and all efforts to reduce toxicity and harassment in games, I’m skeptical about putting this power in the hands of an AI. Exposing machine learning to poor behavior has a tendency to backfire. So far, machines haven’t proven themselves smart enough to consider the context and intricacies of human interaction, especially on a mass scale. Yes, it’s easy enough to teach a script to recognize and regulate slurs. But any additional complication could go wildly awry.

Take Kaplan’s Mei ice wall as an example. If a stranger is being abusive with their ice walls and ruining their teammates’ day, that’s worth actioning, obviously. However, someone might just as easily be goofing around with their friends. In that scenario, nobody is being hurt — they’re all having a laugh. Will Blizzard’s AI be able to tell the difference? Maybe, maybe not, but it’s something the AI will need to take into consideration to be effective.

And if the AI isn’t smart enough, there’s also the potential for helpful players to be caught in the crossfire. Let’s say, for example, the AI recognizes slurs and takes action against anyone who uses them. With no human to consider the context, a player trying to explain why a word shouldn’t be said could be penalized just as heavily as the person who used it as an insult in the first place.

Certainly, I’m willing to give Blizzard the benefit of the doubt here. I don’t think they’d release this system into the wild if it were going to ban their players willy-nilly. But at the same time, I’m not sure an AI will be able to moderate human interactions better than a human would, even if it’s only meant to find the worst, most egregious examples of bad behavior.

@AlexZiebart
